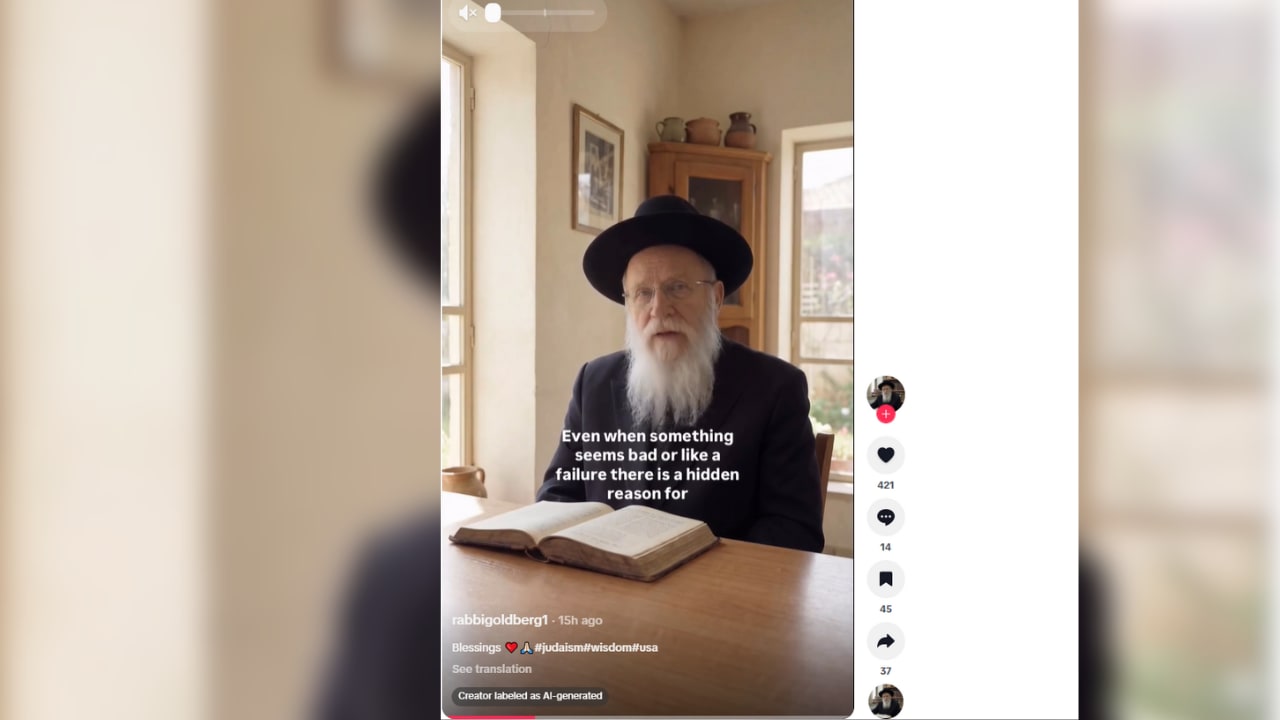

A new report by the Combat Antisemitism Movement (CAM) has uncovered a coordinated network of fake, AI-generated “rabbis” spreading antisemitic tropes across TikTok, raising fresh concerns about the role of artificial intelligence in amplifying online hate.

The study, conducted by CAM’s Antisemitism Research Center (ARC), identified at least 49 TikTok accounts posing as Jewish religious figures while disseminating conspiracy theories and classic antisemitic narratives to a vast global audience. Together, these accounts have amassed more than 950,000 followers and generated over 10 million likes, underscoring the scale and reach of the phenomenon.

According to the report, the accounts use AI-generated avatars and fabricated identities to mimic authoritative Jewish voices, lending credibility to content that recycles long-standing antisemitic tropes. Researchers who analyzed several of the accounts found consistent messaging patterns and narrative structures, suggesting coordination rather than isolated activity.

Among the accounts examined were profiles such as @rabbirothstein and @rabbi_silverstein, which presented themselves as authentic rabbis while promoting harmful and misleading claims about Jews. By framing these ideas as “insider truths,” the content seeks to normalize antisemitism and make it more palatable to mainstream audiences.

CAM warned that this tactic represents a dangerous evolution in digital hate. By disguising antisemitic rhetoric as coming from within the Jewish community, the campaign undermines public trust and blurs the line between authentic voices and manipulated content.

“The danger is clear,” the report stated. “By masquerading as authentic Jewish voices, these ‘rabbis’ erode trust, normalize hatred, and incite real-world violence targeting Jews.”

The findings also highlight TikTok’s vulnerability as a platform with a predominantly young user base. Researchers warned that exposure to such content could accelerate radicalization, particularly as AI-generated media becomes increasingly difficult to detect.

This is not the first time such tactics have been identified. Previous CAM research documented similar networks of AI-generated “rabbis” on Instagram, where more than 70 accounts were found spreading antisemitic narratives. In that case, Meta took steps to remove the accounts following engagement with CAM.

CAM is now calling on TikTok to take similar action and adopt more robust safeguards against AI-driven disinformation.

The report comes amid growing concern over the intersection of artificial intelligence and online extremism. Experts warn that AI tools are enabling the mass production of highly convincing content that can rapidly go viral, making it harder for platforms and users alike to distinguish fact from fabrication.

Indeed, recent examples have shown how AI-generated personas can attract millions of followers by blending traditional antisemitic stereotypes with modern conspiracy theories, often under the guise of humor or “education.”

More broadly, social media algorithms have been criticized for amplifying such content. Research has shown that platforms tend to promote material that generates strong engagement, often the most provocative or extreme posts, creating a feedback loop that rewards the spread of hate.

For CAM and other advocacy groups, the emergence of AI-powered antisemitism represents a new front in an already intensifying battle over online narratives.

“This is not just another iteration of online hate,” the report states. “It is a technologically enhanced campaign designed to manipulate perception at scale.”
